Biden needs to be prosecuted, Donald Trump too. All of these guys have committed violence that had nothing to do with defending our homes or the Constitution.

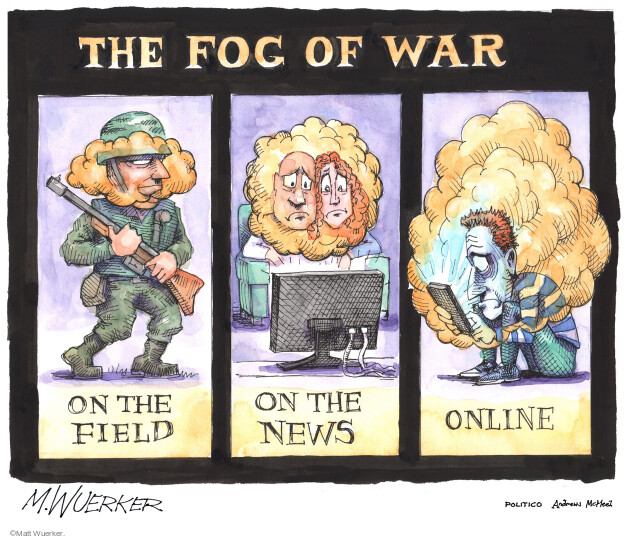

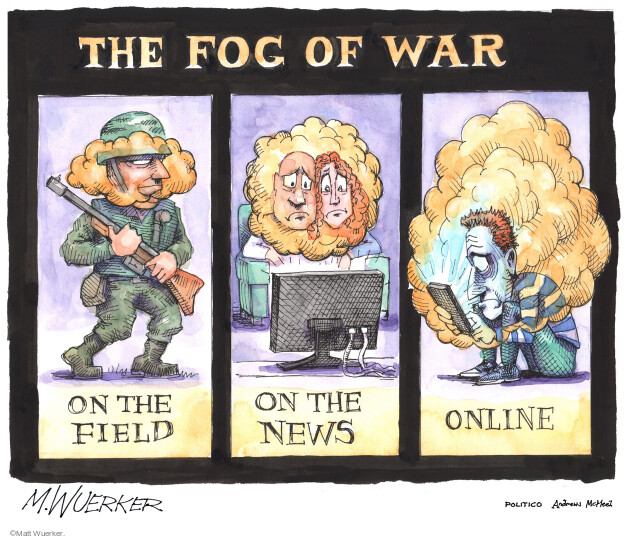

Rise above the propaganda and tribal manipulation to examine the facts. Know where the laws are.

List of other specific laws the US needs to be held accountable to…

https://worldbeyondwar.org/bds-the-u-s-the-world-must-hold-the-u-s-government-to-the-rule-of-law/

————————

David Swanson is an author, activist, journalist, and radio host. He is executive director of WorldBeyondWar.org and campaign coordinator for RootsAction.org. Swanson’s books include War Is A Lie. He blogs at DavidSwanson.org and WarIsACrime.org. He hosts Talk World Radio. He is a Nobel Peace Prize Nominee and U.S. Peace Prize Recipient.

Follow him on Twitter: @davidcnswanson and Facebook.

Help support DavidSwanson.org, WarIsACrime.org, and TalkWorldRadio.org by clicking here: http://davidswanson.org/donate.

Sign up for these emails at https://actionnetwork.org/forms/articles-from-david-swanson

Wars of aggression are illegal under international law. Treaties ratified by the United States are no different than US law according to the Constitution. Preemptive war is illegal.

It is illegal to wage an aggressive war, aid rebels in a civil war, threaten another nation with aggressive war, or use propaganda for war.

It is illegal to attack a hospital, destroy civilian food and drinking water supplies, destroy undefended targets, bomb neutral countries, and indiscriminately attack civilians.

It is illegal to use napalm, white phosphorus and depleted uranium as weapons. It is illegal to use chemical and biological weapons. It is illegal to refuse the surrender of combatants, to pillage, to fail to attend to the wounded, or to carry out extrajudicial executions. It is illegal to fail to discipline or prosecute subordinates who commit war crimes. It is illegal to fund war mercenaries.

There are more, but I think you get the idea…

There are simple truths, some of which we are taught to ignore. The people in power teach us to dehumanize other peoples in order to make the killing easier or more efficient.

Simple truth: People have human rights. Nations have sovereignty.

Aggressive warfare is a violation of law. A nation must be under attack to use self defense, and still needs to work with the UN Security Council.

Why would people of other nations have any fewer rights, responsibilities or protections than we enjoy? We would be better off in a world where there was liberty and justice for everyone, not just protections for those on the side of red, white and blue.

Charter of the United Nations, Chapter VII — Action with respect to Threats to the Peace, Breaches of the Peace, and Acts of Aggression

Convention II Article 2 Geneva Conventions

“In addition to the provisions which shall be implemented in peacetime, the present Convention shall apply to all cases of declared war or of any other armed conflict which may arise between two or more of the High Contracting Parties, even if the state of war is not recognized by one of them.

The Convention shall also apply to all cases of partial or total occupation of the territory of a High Contracting Party, even if the said occupation meets with no armed resistance. Although one of the Powers in conflict may not be a party to the present Convention, the Powers who are parties thereto shall remain bound by it in their mutual relations. They shall furthermore be bound by the Convention in relation to the said Power, if the latter accepts and applies the provisions thereof. ”

Wikipedia: “In international law, the term convention does not have its common meaning as an assembly of people. Rather, it is used in diplomacy to mean an international agreement, or treaty.

With two Geneva Conventions revised and adopted, and the second and fourth added, in 1949 the whole set is referred to as the “Geneva Conventions of 1949” or simply the “Geneva Conventions”. Usually only the Geneva Conventions of 1949 are referred to as First, Second, Third or Fourth Geneva Convention. The treaties of 1949 were ratified, in whole or with reservations, by 196 countries.[1] ”

An example specific to Syria, which also applies beyond its borders:

“…The supply of arms to the Syrian opposition would amount to a breach of the customary principle of non-intervention and the principle of non-use of force under Art. 2 para. 4 of the UN Charter.”

The supply of arms to opposition groups in Syria and international law

Is it legal to supply arms to Syrian rebels?

“International law prohibits states from intervening in the affairs of other states. Commenting upon and discussing situations in other states is not caught by this prohibition, but actions of a coercive nature are. The use of force is arguably the most obvious form of such coercion, whether manifested by direct intervention through the use of a state’s own military forces or indirectly through the provision of arms and training to opposition forces.

The only two established exceptions to the prohibition of the use of force in international law are actions taken in self-defence and those taken under the authorisation of the UN Security Council…”

Then there is the use of torture and assassinations. Illegal torture continues, both in secret and openly, at the hands of our troops. The Joint Chiefs of Staff has a list, compiled under the direction of now-President Obama, of Americans who are to be assassinated. One person they have admitted selecting for assassination is Anwar al-Awlaki.

Congress, not the President, has the power to declare war.

About 90% of those killed when we wage war are now unarmed civilians, regardless of the claims our leaders make about our “precision” weaponry and great technology. They are also bringing this war mentality home, and the criminals will need it there, because eventually Americans will stand up to the tyrants.

The Posse Comitatus Act (and Title 10 of the United States Code) prohibits members of the US military from exercising law enforcement powers on non-federal property within the United States. The John Warner National Defense Authorization Act allowed the use of the Armed Forces in major public emergencies after Hurricane Katrina, but that provision was repealed in 2008, reverting to the Posse Comitatus Act and the Insurrection Act of 1807. (The Insurrection Act of 1807, in the opinion of a number of constitutional scholars, is unconstitutional and would be found so if anyone ever bothered to test it in court.)

America’s Economic Blockades and International Law

“Military blockades are acts of war, and therefore subject to international law, including UN Security Council oversight. America’s economic blockades are similar in function and outcome to military blockades, with devastating consequences for civilian populations, and risk provoking war. It is time for the Security Council to take up the US sanctions regimes and weigh them against the requirements of international law and peacekeeping…”

So much for accountability. August 2013

Obama Gives Bush “Absolute Immunity” For Everything

Instances of the United States overthrowing, or attempting to overthrow, a foreign government since the Second World War

International humanitarian law – Wiki

US Has Killed More Than 20 Million In 37 Nations Since WWII

By James A. Lucas, www.countercurrents.org

There is a long history of war crimes and violence for profit since WW2.

#Numbers #Dead

“After the catastrophic attacks of September 11, 2001, monumental sorrow and a feeling of desperate and understandable anger began to permeate the American psyche. A few people at that time attempted to promote a balanced perspective by pointing out that the United States had also been responsible for causing those same feelings in people in other nations, but they produced hardly a ripple. Although Americans understand in the abstract the wisdom of people around the world empathizing with the suffering of one another, such a reminder of wrongs committed by our nation got little hearing and was soon overshadowed by an accelerated “war on terrorism.”

But we must continue our efforts to develop understanding and compassion in the world. Hopefully, this article will assist in doing that by addressing the question “How many September 11ths has the United States caused in other nations since WWII?” This theme is developed in this report, which contains estimated numbers of such deaths in 37 nations as well as brief explanations of why the U.S. is considered culpable.

The causes of wars are complex. In some instances nations other than the U.S. may have been responsible for more deaths, but if the involvement of our nation appeared to have been a necessary cause of a war or conflict it was considered responsible for the deaths in it. In other words they probably would not have taken place if the U.S. had not used the heavy hand of its power. The military and economic power of the United States was crucial.

This study reveals that U.S. military forces were directly responsible for about 10 to 15 million deaths during the Korean and Vietnam Wars and the two Iraq Wars. The Korean War also includes Chinese deaths while the Vietnam War also includes fatalities in Cambodia and Laos.

The American public probably is not aware of these numbers and knows even less about the proxy wars for which the United States is also responsible. In the latter wars there were between nine and 14 million deaths in Afghanistan, Angola, Democratic Republic of the Congo, East Timor, Guatemala, Indonesia, Pakistan and Sudan.

But the victims are not just from big nations or one part of the world. The remaining deaths were in smaller ones which constitute over half the total number of nations. Virtually all parts of the world have been the target of U.S. intervention.

The overall conclusion reached is that the United States most likely has been responsible since WWII for the deaths of between 20 and 30 million people in wars and conflicts scattered over the world.

To the families and friends of these victims it makes little difference whether the causes were U.S. military action, proxy military forces, the provision of U.S. military supplies or advisors, or other ways, such as economic pressures applied by our nation. They had to make decisions about other things such as finding lost loved ones, whether to become refugees, and how to survive.

And the pain and anger are spread even further. Some authorities estimate that there are as many as 10 wounded for each person who dies in wars. Their visible, ongoing suffering is a constant reminder to their fellow countrymen.

It is essential that Americans learn more about this topic so that they can begin to understand the pain that others feel. Someone once observed that the Germans during WWII “chose not to know.” We cannot allow history to say this about our country. The question posed above was “How many September 11ths has the United States caused in other nations since WWII?” The answer is: possibly 10,000.

Comments on Gathering These Numbers

Generally speaking, the much smaller number of Americans who have died is not included in this study, not because they are not important, but because this report focuses on the impact of U.S. actions on its adversaries.

An accurate count of the number of deaths is not easy to achieve, and this collection of data was undertaken with full realization of this fact. These estimates will probably be revised later either upward or downward by the reader and the author. But undoubtedly the total will remain in the millions.

The difficulty of gathering reliable information is shown by two estimates in this context. For several years I heard statements on radio that three million Cambodians had been killed under the rule of the Khmer Rouge. However, in recent years the figure I heard was one million. Another example is that the number of persons estimated to have died in Iraq due to sanctions after the first U.S. Iraq War was over 1 million, but in more recent years, based on a more recent study, a lower estimate of around a half a million has emerged.

Often information about wars is revealed only much later, when someone decides to speak out, when more secret information is revealed due to the persistent efforts of a few, or after special congressional committees make reports.

Both victorious and defeated nations may have their own reasons for underreporting the number of deaths. Further, in recent wars involving the United States it was not uncommon to hear statements like “we do not do body counts” and references to “collateral damage” as a euphemism for dead and wounded. Life is cheap for some, especially those who manipulate people on the battlefield as if it were a chessboard.

To say that it is difficult to get exact figures is not to say that we should not try. Effort was needed to arrive at the figure of six million Jews killed during WWII, but knowledge of that number is now widespread and it has fueled the determination to prevent future holocausts. That struggle continues.

The author can be contacted at jlucas511@woh.rr.com

continued…

37 VICTIM NATIONS

Afghanistan

The U.S. is responsible for between 1 and 1.8 million deaths during the war between the Soviet Union and Afghanistan, by luring the Soviet Union into invading that nation. (1,2,3,4)

The Soviet Union had friendly relations with its neighbor, Afghanistan, which had a secular government. The Soviets feared that if that government became fundamentalist, this change could spill over into the Soviet Union.

In 1998, in an interview with the Parisian publication Le Nouvel Observateur, Zbigniew Brzezinski, adviser to President Carter, admitted that he had been responsible for instigating aid to the Mujahadeen in Afghanistan which caused the Soviets to invade. In his own words:

“According to the official version of history, CIA aid to the Mujahadeen began during 1980, that is to say, after the Soviet army invaded Afghanistan on 24 December 1979. But the reality, secretly guarded until now, is completely otherwise. Indeed, it was July 3, 1979 that President Carter signed the first directive for secret aid to the opponents of the pro-Soviet regime in Kabul. And that very day, I wrote a note to the President in which I explained to him that in my opinion this aid was going to induce a Soviet military intervention.” (5,1,6)

Brzezinski justified laying this trap, since he said it gave the Soviet Union its Vietnam and caused the breakup of the Soviet Union. “Regret what?” he said. “That secret operation was an excellent idea. It had the effect of drawing the Russians into the Afghan trap and you want me to regret it?” (7)

The CIA spent 5 to 6 billion dollars on its operation in Afghanistan in order to bleed the Soviet Union. (1,2,3) When that 10-year war ended over a million people were dead and Afghan heroin had captured 60% of the U.S. market. (4)

The U.S. has been responsible directly for about 12,000 deaths in Afghanistan, many of which resulted from bombing in retaliation for the attacks on U.S. property on September 11, 2001. Subsequently U.S. troops invaded that country. (4)

Angola

An indigenous armed struggle against Portuguese rule in Angola began in 1961. In 1977 an Angolan government was recognized by the U.N., although the U.S. was one of the few nations that opposed this action. In 1986 Uncle Sam approved material assistance to UNITA, a group that was trying to overthrow the government. Even today this struggle, which has involved many nations at times, continues.

U.S. intervention was justified to the U.S. public as a reaction to the intervention of 50,000 Cuban troops in Angola. However, according to Piero Gleijeses, a history professor at Johns Hopkins University, the reverse was true. The Cuban intervention came as a result of a CIA-financed covert invasion via neighboring Zaire and a drive on the Angolan capital by the U.S. ally, South Africa. (1,2,3) Three estimates of deaths range from 300,000 to 750,000. (4,5,6)

Argentina: See South America: Operation Condor

Bangladesh: See Pakistan

Bolivia

Hugo Banzer was the leader of a repressive regime in Bolivia in the 1970s. The U.S. had been disturbed when a previous leader nationalized the tin mines and distributed land to Indian peasants. Later that action to benefit the poor was reversed.

Banzer, who was trained at the U.S.-operated School of the Americas in Panama and later at Fort Hood, Texas, came back from exile frequently to confer with U.S. Air Force Major Robert Lundin. In 1971 he staged a successful coup with the help of the U.S. Air Force radio system. In the first years of his dictatorship he received twice as much military assistance from the U.S. as in the previous dozen years combined.

A few years later, after the Catholic Church denounced an army massacre of striking tin workers in 1975, Banzer, assisted by information provided by the CIA, was able to target and locate leftist priests and nuns. His anti-clergy strategy, known as the Banzer Plan, was adopted by nine other Latin American dictatorships in 1977. (2) He has been accused of being responsible for 400 deaths during his tenure. (1)

Also see South America: Operation Condor

Brazil: See South America: Operation Condor

Cambodia

U.S. bombing of Cambodia had already been underway for several years in secret under the Johnson and Nixon administrations, but when President Nixon openly began bombing in preparation for a land assault on Cambodia it caused major protests in the U.S. against the Vietnam War.

There is little awareness today of the scope of these bombings and the human suffering involved.

Immense damage was done to the villages and cities of Cambodia, causing refugees and internal displacement of the population. This unstable situation enabled the Khmer Rouge, a small political party led by Pol Pot, to assume power. Over the years we have repeatedly heard about the Khmer Rouge’s role in the deaths of millions in Cambodia without any acknowledgement that this mass killing was made possible by the U.S. bombing of that nation, which destabilized it through death, injuries, hunger and dislocation of its people.

So the U.S. bears responsibility not only for the deaths from the bombings but also for those resulting from the activities of the Khmer Rouge – a total of about 2.5 million people. Even when Vietnam later invaded Cambodia in 1979, the CIA was still supporting the Khmer Rouge. (1,2,3)

Also see Vietnam

Chad

An estimated 40,000 people in Chad were killed and as many as 200,000 tortured by a government, headed by Hissen Habre who was brought to power in June, 1982 with the help of CIA money and arms. He remained in power for eight years. (1,2)

Human Rights Watch claimed that Habre was responsible for thousands of killings. In 2001, while living in Senegal, he was almost tried for crimes committed by him in Chad. However, a court there blocked these proceedings. Then human rights advocates decided to pursue the case in Belgium, because some of Habre’s torture victims lived there. The U.S., in June 2003, told Belgium that it risked losing its status as host to NATO’s headquarters if it allowed such a legal proceeding to happen. So the result was that the law that allowed victims to file complaints in Belgium for atrocities committed abroad was repealed. However, two months later a new law was passed which made special provision for the continuation of the case against Habre.

Chile

The CIA intervened in Chile’s 1958 and 1964 elections. In 1970 a socialist candidate, Salvador Allende, was elected president. The CIA wanted to incite a military coup to prevent his inauguration, but the Chilean army’s chief of staff, General Rene Schneider, opposed this action. The CIA then planned, along with some people in the Chilean military, to assassinate Schneider. This plot failed and Allende took office. President Nixon was not to be dissuaded and he ordered the CIA to create a coup climate: “Make the economy scream,” he said.

What followed were guerilla warfare, arson, bombing, sabotage and terror. ITT and other U.S. corporations with Chilean holdings sponsored demonstrations and strikes. Finally, on September 11, 1973 Allende died either by suicide or by assassination. At that time Henry Kissinger, U.S. Secretary of State, said the following regarding Chile: “I don’t see why we need to stand by and watch a country go communist because of the irresponsibility of its own people.” (1)

During 17 years of terror under Allende’s successor, General Augusto Pinochet, an estimated 3,000 Chileans were killed and many others were tortured or “disappeared.” (2,3,4,5)

Also see South America: Operation Condor

China

An estimated 900,000 Chinese died during the Korean War. For more information, see Korea.

Colombia

One estimate is that 67,000 deaths have occurred from the 1960s to recent years due to support by the U.S. of Colombian state terrorism. (1)

According to a 1994 Amnesty International report, more than 20,000 people had been killed for political reasons in Colombia since 1986, mainly by the military and its paramilitary allies. Amnesty alleged that “U.S.-supplied military equipment, ostensibly delivered for use against narcotics traffickers, was being used by the Colombian military to commit abuses in the name of ‘counter-insurgency.’” (2) In 2002 another estimate was made that 3,500 people die each year in a U.S.-funded civil war in Colombia. (3)

In 1996 Human Rights Watch issued a report “Assassination Squads in Colombia” which revealed that CIA agents went to Colombia in 1991 to help the military to train undercover agents in anti-subversive activity. (4,5)

In recent years the U.S. government has provided assistance under Plan Colombia. The Colombian government has been charged with using most of the funds for destruction of crops and support of the paramilitary group.

Cuba

In the Bay of Pigs invasion of Cuba, which began on April 17, 1961 and ended after 3 days, 114 of the invading force were killed, 1,189 were taken prisoner and a few escaped to waiting U.S. ships. (1) The captured exiles were quickly tried, a few executed and the rest sentenced to thirty years in prison for treason. These exiles were released after 20 months in exchange for $53 million in food and medicine.

Some people estimate that the number of Cuban forces killed ranged from 2,000 to 4,000. Another estimate is that 1,800 Cuban forces were killed on an open highway by napalm. This appears to have been a precursor of the Highway of Death in Iraq in 1991, when U.S. forces mercilessly annihilated large numbers of Iraqis on a highway. (2)

Democratic Republic of Congo (formerly Zaire)

The beginning of massive violence was instigated in this country in 1879 by its colonizer King Leopold of Belgium. The Congo’s population was reduced by 10 million people over a period of 20 years which some have referred to as “Leopold’s Genocide.” (1) The U.S. has been responsible for about a third of that many deaths in that nation in the more recent past. (2)

In 1960 the Congo became an independent state with Patrice Lumumba being its first prime minister. He was assassinated with the CIA being implicated, although some say that his murder was actually the responsibility of Belgium. (3) But nevertheless, the CIA was planning to kill him. (4) Before his assassination the CIA sent one of its scientists, Dr. Sidney Gottlieb, to the Congo carrying “lethal biological material” intended for use in Lumumba’s assassination. This virus would have been able to produce a fatal disease indigenous to the Congo area of Africa and was transported in a diplomatic pouch.

Much of the time in recent years there has been a civil war within the Democratic Republic of Congo, fomented often by the U.S. and other nations, including neighboring nations. (5)

In April 1977, Newsday reported that the CIA was secretly supporting efforts to recruit several hundred mercenaries in the U.S. and Great Britain to serve alongside Zaire’s army. In that same year the U.S. provided $15 million of military supplies to the Zairian President Mobutu to fend off an invasion by a rival group operating in Angola. (6)

In May 1979, the U.S. sent several million dollars of aid to Mobutu, who had been condemned 3 months earlier by the U.S. State Department for human rights violations. (7) During the Cold War the U.S. funneled over 300 million dollars in weapons into Zaire, (8,9) and $100 million in military training was provided to him. (2) In 2001 it was reported to a U.S. congressional committee that American companies, including one linked to former President George Bush Sr., were stoking the conflict in the Congo for monetary gain. There is an international battle over resources in that country, with over 125 companies and individuals being implicated. One of these substances is coltan, which is used in the manufacture of cell phones. (2)

Dominican Republic

In 1962, Juan Bosch became president of the Dominican Republic. He advocated such programs as land reform and public works programs. This did not bode well for his future relationship with the U.S., and after only 7 months in office, he was deposed by a CIA coup. In 1965 when a group was trying to reinstall him to his office President Johnson said, “This Bosch is no good.” Assistant Secretary of State Thomas Mann replied “He’s no good at all. If we don’t get a decent government in there, Mr. President, we get another Bosch. It’s just going to be another sinkhole.” Two days later a U.S. invasion started and 22,000 soldiers and marines entered the Dominican Republic and about 3,000 Dominicans died during the fighting. The cover excuse for doing this was that this was done to protect foreigners there. (1,2,3,4)

East Timor

In December 1975, Indonesia invaded East Timor. This incursion was launched the day after U.S. President Gerald Ford and Secretary of State Henry Kissinger had left Indonesia, where they had given President Suharto permission to use American arms, which under U.S. law could not be used for aggression. Daniel Moynihan, U.S. ambassador to the U.N., said that the U.S. wanted “things to turn out as they did.” (1,2) The result was an estimated 200,000 dead out of a population of 700,000. (1,2)

Sixteen years later, on November 12, 1991, two hundred and seventeen East Timorese protesters in Dili, many of them children, marching from a memorial service, were gunned down by Indonesian Kopassus shock troops who were headed by U.S.-trained commanders Prabowo Subianto (son-in-law of General Suharto) and Kiki Syahnakri. Trucks were seen dumping bodies into the sea. (5)

El Salvador

The civil war from 1981 to 1992 in El Salvador was financed by $6 billion in U.S. aid given to support the government in its efforts to crush a movement to bring social justice to the people in that nation of about 8 million people. (1)

During that time U.S. military advisers demonstrated methods of torture on teenage prisoners, according to an interview with a deserter from the Salvadoran army published in the New York Times. This former member of the Salvadoran National Guard testified that he was a member of a squad of twelve who found people who they were told were guerillas and tortured them. Part of the training he received was in torture at a U.S. location somewhere in Panama. (2)

About 900 villagers were massacred in the village of El Mozote in 1981. Ten of the twelve El Salvadoran government soldiers cited as participating in this act were graduates of the School of the Americas operated by the U.S. (2) They were only a small part of about 75,000 people killed during that civil war. (1)

According to a 1993 United Nations Truth Commission report, over 96% of the human rights violations carried out during the war were committed by the Salvadoran army or the paramilitary death squads associated with the Salvadoran army. (3)

That commission linked graduates of the School of the Americas to many notorious killings. The New York Times and the Washington Post followed with scathing articles. In 1996, the White House Oversight Board issued a report that supported many of the charges against that school made by Rev. Roy Bourgeois, head of the School of the Americas Watch. That same year the Pentagon released formerly classified reports indicating that graduates were trained in killing, extortion, and physical abuse for interrogations, false imprisonment and other methods of control. (4)

Grenada

The CIA began to destabilize Grenada in 1979 after Maurice Bishop became president, partially because he refused to join the quarantine of Cuba. The campaign against him resulted in his overthrow and the invasion by the U.S. of Grenada on October 25, 1983, with about 277 people dying. (1,2) It was fallaciously charged that an airport was being built in Grenada that could be used to attack the U.S. and it was also erroneously claimed that the lives of American medical students on that island were in danger.

Guatemala

In 1951 Jacobo Arbenz was elected president of Guatemala. He expropriated some unused land held by the United Fruit Company and compensated the company. (1,2) That company then started a campaign to paint Arbenz as a tool of an international conspiracy and hired about 300 mercenaries who sabotaged oil supplies and trains. (3) In 1954 a CIA-orchestrated coup put him out of office and he left the country. During the next 40 years various regimes killed thousands of people.

In 1999 the Washington Post reported that the Historical Clarification Commission concluded that over 200,000 people had been killed during the civil war and that there had been 42,000 individual human rights violations, 29,000 of them fatal, 92% of which were committed by the army. The commission further reported that the U.S. government and the CIA had pressured the Guatemalan government into suppressing the guerilla movement by ruthless means. (4,5)

According to the Commission between 1981 and 1983 the military government of Guatemala – financed and supported by the U.S. government – destroyed some four hundred Mayan villages in a campaign of genocide. (4)

One of the documents made available to the commission was a 1966 memo from a U.S. State Department official, which described how a “safe house” was set up in the palace for use by Guatemalan security agents and their U.S. contacts. This was the headquarters for the Guatemalan “dirty war” against leftist insurgents and suspected allies. (2)

Haiti

From 1957 to 1986 Haiti was ruled by Papa Doc Duvalier and later by his son. During that time their private terrorist force killed between 30,000 and 100,000 people. (1) Millions of dollars in CIA subsidies flowed into Haiti during that time, mainly to suppress popular movements, (2) although most American military aid to the country, according to William Blum, was covertly channeled through Israel.

Reportedly, governments after the second Duvalier reign were responsible for an even larger number of fatalities, and the influence on Haiti by the U.S., particularly through the CIA, has continued. The U.S. later forced out of the presidential office a black Catholic priest, Jean Bertrand Aristide, even though he was elected with 67% of the vote in the early 1990s. The wealthy white class in Haiti opposed him in this predominantly black nation, because of his social programs designed to help the poor and end corruption. (3) Later he returned to office, but that did not last long. He was forced by the U.S. to leave office and now lives in South Africa.

Honduras

In the 1980s the CIA supported Battalion 316 in Honduras, which kidnapped, tortured and killed hundreds of its citizens. Torture equipment and manuals were provided by CIA Argentinean personnel who worked with U.S. agents in the training of the Hondurans. Approximately 400 people lost their lives. (1,2) This is another instance of torture in the world sponsored by the U.S. (3)

Battalion 316 used shock and suffocation devices in interrogations in the 1980s. Prisoners often were kept naked and, when no longer useful, killed and buried in unmarked graves. Declassified documents and other sources show that the CIA and the U.S. Embassy knew of numerous crimes, including murder and torture, yet continued to support Battalion 316 and collaborate with its leaders. (4)

Honduras was a staging ground in the early 1980s for the Contras, who were trying to overthrow the socialist Sandinista government in Nicaragua. John D. Negroponte, currently Deputy Secretary of State, was our ambassador when our military aid to Honduras rose from $4 million to $77.4 million per year. Negroponte denies having had any knowledge of these atrocities during his tenure. However, his predecessor, Jack R. Binns, had reported in 1981 that he was deeply concerned at increasing evidence of officially sponsored or sanctioned assassinations. (5)

Hungary

In 1956 Hungary, a Soviet satellite nation, revolted against the Soviet Union. During the uprising, broadcasts into Hungary by the U.S.-backed Radio Free Europe sometimes took on an aggressive tone, encouraging the rebels to believe that Western support was imminent and even giving tactical advice on how to fight the Soviets. These broadcasts raised the rebels' hopes and then dashed them, casting an even darker shadow over the Hungarian tragedy. (1) The Hungarian and Soviet death toll was about 3,000, and the revolution was crushed. (2)

Indonesia

In 1965, in Indonesia, a coup replaced President Sukarno with General Suharto as leader. The U.S. played a role in that change of government. Robert Martens, a former officer in the U.S. embassy in Indonesia, described how U.S. diplomats and CIA officers provided up to 5,000 names to Indonesian Army death squads in 1965 and checked them off as they were killed or captured. Martens admitted, “I probably have a lot of blood on my hands, but that’s not all bad. There’s a time when you have to strike hard at a decisive moment.” (1,2,3) Estimates of the number of deaths range from 500,000 to 3 million. (4,5,6)

From 1993 to 1997 the U.S. provided Jakarta with almost $400 million in economic aid and sold tens of millions of dollars' worth of weaponry to that nation. U.S. Green Berets trained Indonesia’s elite force, which was responsible for many of the atrocities in East Timor. (3)

Iran

Iran lost about 262,000 people in the war against Iraq from 1980 to 1988. (1) See Iraq for more information about that war.

On July 3, 1988, the U.S. Navy cruiser Vincennes was operating within Iranian waters, providing military support for Iraq during the Iran-Iraq War. During a battle against Iranian gunboats, it fired two missiles at an Iranian Airbus on a routine civilian flight. All 290 civilians on board were killed. (2,3)

Iraq

A: The Iraq-Iran War lasted from 1980 to 1988, and during that time there were about 105,000 Iraqi deaths, according to the Washington Post. (1,2)

According to Howard Teicher, a former National Security Council official, the U.S. provided the Iraqis with billions of dollars in credits and helped Iraq in other ways, such as making sure that Iraq had military equipment, including biological agents. This surge of help for Iraq came as Iran seemed to be winning the war and was close to Basra. (1) The U.S. was not averse to both countries weakening themselves in the war, but it did not appear to want either side to win.

B: The U.S.-Iraq War and the Sanctions Against Iraq extended from 1990 to 2003.

Iraq invaded Kuwait on August 2, 1990. The U.S. responded by demanding that Iraq withdraw, and four days later the U.N. imposed international sanctions.

Iraq had reason to believe that the U.S. would not object to its invasion of Kuwait, since U.S. Ambassador to Iraq, April Glaspie, had told Saddam Hussein that the U.S. had no position on the dispute that his country had with Kuwait. So the green light was given, but it seemed to be more of a trap.

As part of the public relations strategy to rally the American public behind an attack on Iraq, the daughter of the Kuwaiti ambassador to the U.S. falsely testified before a congressional human rights caucus that Iraqi troops were taking babies out of incubators in Kuwaiti hospitals. (1) This contributed to a war frenzy in the U.S.

The U.S. air assault started on January 17, 1991, and lasted for 42 days. On February 23 President George H.W. Bush ordered the U.S. ground assault to begin. The invasion involved much needless killing of Iraqi military personnel. Only about 150 American military personnel died, compared to about 200,000 Iraqis. Some of the Iraqis were mercilessly killed on the Highway of Death, and the U.S. left about 400 tons of depleted uranium in that nation. (2,3)

Other deaths came later: delayed deaths from wounds, civilian deaths, deaths caused by damage to Iraq's water treatment facilities and other infrastructure, and deaths caused by the sanctions.

In 1995 the Food and Agriculture Organization of the U.N. reported that U.N. sanctions on Iraq had been responsible for the deaths of more than 560,000 children since 1990. (5)

On the TV program 60 Minutes in 1996, Lesley Stahl said to Madeleine Albright, then U.S. Ambassador to the U.N., “We have heard that a half million children have died. I mean, that’s more children than died in Hiroshima. And – and you know, is the price worth it?” Albright replied, “I think this is a very hard choice, but the price – we think is worth it.” (4)

In 1999 UNICEF reported that 5,000 children were dying each month as a result of the sanctions and the war with the U.S. (6)

Richard Garfield later estimated that the more likely number of excess deaths among children under five years of age from 1990 through March 1998 was 227,000, double the level of the previous decade. Garfield estimated the number through 2000 at 350,000 (based in part on the results of another study). (7)

However, there are limitations to his study. His figures were not updated for the remaining three years of the sanctions. Also, two other somewhat vulnerable age groups were not studied: young children above the age of five and the elderly.

All of these reports were strong indicators of massive numbers of deaths, which the U.S. was aware of and which were part of its strategy to cause enough pain and terror among Iraqis to make them revolt against their government.

C: Iraq-U.S. War started in 2003 and has not been concluded

Just as the end of the Cold War emboldened the U.S. to attack Iraq in 1991, so the attacks of September 11, 2001, laid the groundwork for the U.S. to launch the current war against Iraq. While in some other wars we learned much later about the lies that were used to deceive us, some of the deceptions that were used to get us into this war became known almost as soon as they were uttered. There were no weapons of mass destruction, we were not trying to promote democracy, and we were not trying to save the Iraqi people from a dictator.

According to Johns Hopkins researchers, the total number of Iraqi deaths resulting from the current U.S. war against Iraq is 654,000, of which 600,000 are attributed to acts of violence. (1,2)

Since these deaths are a result of the U.S. invasion, our leaders must accept responsibility for them.

Israeli-Palestinian War

About 100,000 to 200,000 Israelis and Palestinians, but mostly the latter, have been killed in the struggle between those two groups. The U.S. has been a strong supporter of Israel, providing billions of dollars in aid and supporting its possession of nuclear weapons. (1,2)

Korea, North and South

The Korean War started in 1950 when, according to the Truman administration, North Korea invaded South Korea on June 25th. However, another explanation has since emerged, which maintains that the attack by North Korea came during a period of many border incursions by both sides. South Korea initiated most of the border clashes with North Korea, beginning in 1948. The North Korean government claimed that by 1949 the South Korean army had committed 2,617 armed incursions. It was a myth that the Soviet Union ordered North Korea to attack South Korea. (1,2)

The U.S. started its attack before a U.N. resolution was passed supporting our nation’s intervention, and our military forces added to the mayhem in the war by introducing the use of napalm. (1)

During the war the bulk of the deaths were South Koreans, North Koreans and Chinese. Four sources give death counts ranging from 1.8 to 4.5 million. (3,4,5,6) Another source gives a total of 4 million but does not identify the nationalities of the dead. (7)

John H. Kim, a U.S. Army veteran and the Chair of the Korea Committee of Veterans for Peace, stated in an article that during the Korean War “the U.S. Army, Air Force and Navy were directly involved in the killing of about three million civilians – both South and North Koreans – at many locations throughout Korea…It is reported that the U.S. dropped some 650,000 tons of bombs, including 43,000 tons of napalm bombs, during the Korean War.” It is presumed that this total does not include Chinese casualties.

Another source states a total of about 500,000 who were Koreans and presumably only military. (8,9)

Laos

From 1965 to 1973, during the Vietnam War, the U.S. dropped over two million tons of bombs on Laos, more than was dropped in WWII by both sides. Over a quarter of the population became refugees. This was later called a “secret war,” since it occurred at the same time as the Vietnam War but got little press. Branfman makes the only estimate that I am aware of, stating that hundreds of thousands died; this can be interpreted to mean that at least 200,000 died. (1,2,3)

U.S. military intervention in Laos actually began much earlier. A civil war started in the 1950s when the U.S. recruited a force of 40,000 Laotians to oppose the Pathet Lao, a leftist political party that ultimately took power in 1975.

Also see Vietnam.

Nepal

Between 8,000 and 12,000 Nepalese have died since a civil war broke out in 1996. According to Foreign Policy in Focus, the death rate increased sharply with the arrival of almost 8,400 American M-16 automatic rifles (950 rounds per minute) and U.S. advisers. Nepal is 85 percent rural and badly in need of land reform. Not surprisingly, 42 percent of its people live below the poverty level. (1,2)

In 2002, after another civil war erupted, President George W. Bush pushed a bill through Congress authorizing $20 million in military aid to the Nepalese government. (3)

Nicaragua

In 1979 the Sandinistas overthrew the Somoza government in Nicaragua, (1) and until 1990 about 25,000 Nicaraguans were killed in an armed struggle between the Sandinista government and the Contra rebels, who were formed from the remnants of Somoza’s National Guard. The Contras' use of assassination manuals came to light in 1984. (2,3)

The U.S. opposed the Sandinista government by providing covert military aid to the Contras (anti-communist guerrillas) starting in November 1981. But when Congress discovered that the CIA had supervised acts of sabotage in Nicaragua without notifying Congress, it passed the Boland Amendment in 1983, which prohibited the CIA, the Defense Department and any other government agency from providing any further covert military assistance. (4)

But ways were found to get around this prohibition. The National Security Council, which was not explicitly covered by the law, raised private and foreign funds for the Contras. In addition, arms were sold to Iran, and the proceeds from those sales were diverted to the Contras fighting the Sandinista government. (5) Finally, in 1990 the Sandinistas were voted out of office by voters who thought that a change in leadership would placate the U.S., which was causing misery to Nicaragua’s citizenry through its support of the Contras.

Pakistan

In 1971 West Pakistan, an authoritarian state supported by the U.S., brutally invaded East Pakistan. The war ended after India, whose economy was staggering after admitting about 10 million refugees, invaded East Pakistan (now Bangladesh) and defeated the West Pakistani forces. (1)

Millions of people died during that brutal struggle, which some have called genocide committed by West Pakistan. West Pakistan had long been an ally of the U.S., starting with $411 million the U.S. provided to establish its armed forces; the country spent 80% of its budget on its military. During the war, $15 million in arms flowed into West Pakistan. (2,3,4)

Three sources estimate that 3 million people died (5,2,6), and one source estimates 1.5 million. (3)

Panama

In December 1989 U.S. troops invaded Panama, ostensibly to arrest Manuel Noriega, that nation’s de facto ruler. This was an example of the U.S. view that it is the master of the world and can arrest anyone it wants to. For a number of years before that, Noriega had worked for the CIA, but he fell out of favor partly because he did not oppose the Sandinistas in Nicaragua. (1) Estimates of the number of people who died range from 500 to 4,000. (2,3,4)

Paraguay: See South America: Operation Condor

Philippines

The Philippines has been under U.S. control or influence for over a hundred years. In roughly the last 50 to 60 years, the U.S. has funded and otherwise helped various Philippine governments that sought to suppress groups working for the welfare of the country's people. In 1969 the Symington Committee in the U.S. Congress revealed how war materiel was sent there for a counter-insurgency campaign. U.S. Special Forces and Marines took part in some combat operations. More than 100,000 people are estimated to have been executed or disappeared under President Ferdinand Marcos. (1,2)

South America: Operation Condor

This was a joint operation of six despotic South American governments (Argentina, Bolivia, Brazil, Chile, Paraguay and Uruguay) to share information about their political opponents. An estimated 13,000 people were killed under this plan. (1)

It was established on November 25, 1975, in Chile at the Inter-American Meeting on Military Intelligence. According to U.S. embassy political officer John Tipton, the CIA and the Chilean secret police were working together, although the CIA did not set up the operation itself. Reportedly, it ended in 1983. (2)

On March 6, 2001 the New York Times reported the existence of a recently declassified State Department document revealing that the United States facilitated communications for Operation Condor. (3)

Sudan

Since 1955, shortly before it gained its independence in 1956, Sudan has been involved in civil war most of the time. By about 2003 approximately 2 million people had been killed. It is not known whether the death toll in Darfur is included in that total.

Human rights groups have complained that U.S. policies have helped to prolong the Sudanese civil war by supporting efforts to overthrow the central government in Khartoum. In 1999 U.S. Secretary of State Madeleine Albright met with the leader of the Sudan People’s Liberation Army (SPLA) who said that she offered him food supplies if he would reject a peace plan sponsored by Egypt and Libya.

In 1978 the vastness of Sudan’s oil reserves was discovered, and within two years Sudan became the sixth largest recipient of U.S. military aid. It is reasonable to assume that if the U.S. helps a government come to power, that government will feel obligated to give the U.S. part of the oil pie.

A British group, Christian Aid, has accused foreign oil companies of complicity in the depopulation of villages. These companies, which are not American, receive government protection and in turn allow the government to use their airstrips and roads.

In August 1998 the U.S. bombed Khartoum, Sudan, with 75 cruise missiles. Our government said that the target was a chemical weapons factory owned by Osama bin Laden. Actually, bin Laden was no longer the owner, and the plant had been the sole pharmaceutical supplier for that poor nation. As a result of the bombing, tens of thousands may have died for lack of medicines to treat malaria, tuberculosis and other diseases. The U.S. settled a lawsuit filed by the factory’s owner. (1,2)

Uruguay: See South America: Operation Condor

Vietnam

In Vietnam, under the 1954 Geneva Accords, there was supposed to be an election to unify North and South Vietnam. The U.S. opposed this and supported the Diem government in South Vietnam. In August 1964 the CIA and others helped fabricate a phony North Vietnamese attack on a U.S. ship in the Gulf of Tonkin, and this was used as a pretext for greater U.S. involvement in Vietnam. (1)

During that war an American assassination operation called Operation Phoenix terrorized the South Vietnamese people, and in 1968 American troops massacred the people of the village of My Lai.

According to a Vietnamese government statement in 1995 the number of deaths of civilians and military personnel during the Vietnam War was 5.1 million. (2)

Since deaths in Cambodia and Laos were about 2.7 million (see Cambodia and Laos), the estimated total for the Vietnam War is 7.8 million.

The Virtual Truth Commission gives a total of 5 million for the war, (3) and Robert McNamara, former Secretary of Defense, puts the number of Vietnamese dead at 3.4 million, according to the New York Times Magazine. (4,5)

Yugoslavia

Yugoslavia was a socialist federation of several republics. Because it refused to be closely tied to the Soviet Union during the Cold War, it gained some support from the U.S. But when the Soviet Union dissolved, Yugoslavia’s usefulness to the U.S. ended, and the U.S. and Germany worked to convert its socialist economy to a capitalist one, primarily by a process of divide and conquer. Ethnic and religious differences between various parts of Yugoslavia were manipulated by the U.S. to cause several wars, which resulted in the dissolution of that country.

Since the early 1990s Yugoslavia has split into several independent nations whose lowered incomes, along with CIA connivance, have made them pawns in the hands of capitalist countries. (1) The dissolution of Yugoslavia was caused primarily by the U.S. (2)

Here are death toll estimates for some, if not all, of the internal wars in Yugoslavia: all wars, 107,000 (3,4); Bosnia and Krajina, 250,000 (5); Bosnia, 20,000 to 30,000 (5); Croatia, 15,000 (6); and Kosovo, 500 to 5,000. (7)

NOTES

Afghanistan

1. Mark Zepezauer, Boomerang (Monroe, Maine: Common Courage Press, 2003), p. 135.

2. Chronology of American State Terrorism, http://www.intellnet.org/resources/american_terrorism/ChronologyofTerror.html

3. Soviet War in Afghanistan, http://en.wikipedia.org/wiki/Soviet_war_in_Afghanistan

4. Mark Zepezauer, The CIA’s Greatest Hits (Monroe, Maine: Common Courage Press, 1994), p. 76.

5. U.S. Involvement in Afghanistan, Wikipedia, http://en.wikipedia.org/wiki/Soviet_war_in_Afghanistan

6. The CIA’s Intervention in Afghanistan, interview with Zbigniew Brzezinski, Le Nouvel Observateur, Paris, 15-21 January 1998, posted at globalresearch.ca, 15 October 2001, http://www.globalresearch.ca/articles/BRZ110A.html

7. William Blum, Rogue State (Monroe, Maine: Common Courage Press, 2000), p. 5.

8. Unknown News, http://www.unknownnews.net/casualtiesw.html

Angola

1. Howard W. French, “From Old Files, a New Story of the U.S. Role in the Angolan War,” New York Times, 3/31/02.

2. Angolan Update, American Friends Service Committee FS, 11/1/99 flyer.

3. Norman Solomon, War Made Easy (John Wiley & Sons, 2005), pp. 82-83.

4. Lance Selfa, U.S. Imperialism, A Century of Slaughter, International Socialist Review Issue 7, Spring 1999 (as it appears in Third World Traveler, www.thirdworldtraveler.com/American_Empire/Century_Imperialism.html)

5. F. Jeffress Ramsay, Africa (Guilford, Connecticut: Dushkin/McGraw Hill, 1997), pp. 144-145.

6. Mark Zepezauer, The CIA’s Greatest Hits (Monroe, Maine: Common Courage Press, 1994), p. 54.

Argentina: See South America: Operation Condor

Bolivia

1. Phil Gunson, Guardian, 5/6/02, http://www.guardian.co.uk/archive/article/0,4273,41-07884,00.html

2. Jerry Meldon, Return of Bolivia’s Drug-Stained Dictator, Consortium, www.consortiumnews.com/archives/story40.html

Brazil: See South America: Operation Condor

Cambodia

1. Virtual Truth Commission, http://www.geocities.com/~virtualtruth/

2. David Model, President Richard Nixon, Henry Kissinger, and the Bombing of Cambodia, excerpted from the book Lying for Empire: How to Commit War Crimes With A Straight Face (Common Courage Press, 2005, paper), http://thirdworldtraveler.com/American_Empire/Nixon_Cambodia_LFE.html

3. Noam Chomsky, Chomsky on Cambodia under Pol Pot, etc., http://zmag.org/forums/chomcambodforum.htm

Chad

1. William Blum, Rogue State (Monroe, Maine: Common Courage Press, 2000), pp. 151-152.

2. Richard Keeble, Crimes Against Humanity in Chad, Znet/Activism, 12/4/06, http://www.zmag.org/content/print_article.cfm?itemID=11560&sectionID=1

Chile

1. Michael Parenti, The Sword and the Dollar (New York: St. Martin’s Press, 1989), p. 56.

2. William Blum, Rogue State (Monroe, Maine: Common Courage Press, 2000), pp. 142-143.

3. Moreorless: Heroes and Killers of the 20th Century, Augusto Pinochet Ugarte, http://www.moreorless.au.com/killers/pinochet.html

4. Associated Press, Pinochet, on 91st Birthday, Takes Responsibility for Regime’s Abuses, Dayton Daily News, 11/26/06.

5. Chalmers Johnson, Blowback: The Costs and Consequences of American Empire (New York: Henry Holt and Company, 2000), p. 18.

China: See Korea

Colombia

1. Chronology of American State Terrorism, p. 2, http://www.intellnet.org/resources/american_terrorism/ChronologyofTerror.html

2. William Blum, Rogue State (Monroe, Maine: Common Courage Press, 2000), p. 163.

3. Millions Killed by Imperialism, Washington Post, May 6, 2002, http://www.etext.org/Politics/MIM/rail/impkills.html

4. Gabriella Gamini, CIA Set Up Death Squads in Colombia, Times Newspapers Limited, Dec. 5, 1996, www.edu/CommunicationsStudies/ben/news/cia/961205.death.html

5. Virtual Truth Commission, 1991; Human Rights Watch Report, Colombia’s Killer Networks: The Military-Paramilitary Partnership.

Cuba

1. St. James Encyclopedia of Popular Culture, on Bay of Pigs Invasion, http://bookrags.com/Bay_of_Pigs_Invasion

2. Wikipedia, http://bookrags.com/Bay_of_Pigs_Invasion#Casualties

Democratic Republic of Congo (Formerly Zaire)

1. F. Jeffress Ramsay, Africa (Guilford, Connecticut, 1997), p. 85.

2. Anup Shah, The Democratic Republic of Congo, 10/31/2003, http://www.globalissues.org/Geopolitics/Africa/DRC.asp

3. Kevin Whitelaw, A Killing in Congo, U.S. News and World Report, http://www.usnews.com/usnews/doubleissue/mysteries/patrice.htm

4. William Blum, Killing Hope (Monroe, Maine: Common Courage Press, 1995), pp. 158-159.

5. Ibid., p. 260.

6. Ibid., p. 259.

7. Ibid., p. 262.

8. David Pickering, “World War in Africa,” 6/26/02, www.9-11peace.org/bulletin.php3

9. William D. Hartung and Bridget Moix, Deadly Legacy: U.S. Arms to Africa and the Congo War, Arms Trade Resource Center, January 2000, www.worldpolicy.org/projects/arms/reports/congo.htm

Dominican Republic

1. Norman Solomon, Intervention Spin Cycle, Baltimore Sun, April 26, 2005, http://www.globalpolicy.org/empire/history/2005/0426spincycle.htm

2. Wikipedia, http://en.wikipedia.org/wiki/Operation_Power_Pack

3. William Blum, Killing Hope (Monroe, Maine: Common Courage Press, 1995), p. 175.

4. Mark Zepezauer, The CIA’s Greatest Hits (Monroe, Maine: Common Courage Press, 1994), pp. 26-27.

East Timor

1. Virtual Truth Commission, http://www.geocities.com/~virtualtruth/date4.htm

2. Matthew Jardine, Unraveling Indonesia, Nonviolent Activist, 1997.

3. Chronology of American State Terrorism, http://www.intellnet.org/resources/american_terrorism/ChronologyofTerror.html

4. William Blum, Killing Hope (Monroe, Maine: Common Courage Press, 1995), p. 197.

5. US trained butchers of Timor, The Guardian, London, cited by The Drudge Report, September 19, 1999, http://www.geocities.com/~virtualtruth/indon.htm

El Salvador

1. Robert T. Buckman, Latin America 2003 (Baltimore: Stryker-Post Publications, 2003), pp. 152-153.

2. William Blum, Rogue State (Monroe, Maine: Common Courage Press, 2000), pp. 54-55.

3. El Salvador, Wikipedia, http://en.wikipedia.org/wiki/El_Salvador#The_20th_century_and_beyond

4. Virtual Truth Commission, http://www.geocities.com/~virtualtruth/

Grenada

1. Mark Zepezauer, The CIA’s Greatest Hits (Monroe, Maine: Common Courage Press, 1994), pp. 66-67.

2. Stephen Zunes, The U.S. Invasion of Grenada, http://www.fpif.org/papers/grenada2003.html

Guatemala

1. Virtual Truth Commission, http://www.geocities.com/~virtualtruth/

2. Ibid.

3. Mark Zepezauer, The CIA’s Greatest Hits (Monroe, Maine: Common Courage Press, 1994), pp. 2-13.

4. Robert T. Buckman, Latin America 2003 (Baltimore: Stryker-Post Publications, 2003), p. 162.

5. Douglas Farah, Papers Show U.S. Role in Guatemalan Abuses, Washington Post Foreign Service, March 11, 1999, p. A26.

Haiti

1. Francois Duvalier, http://en.wikipedia.org/wiki/Fran%C3%A7ois_Duvalier#Reign_of_terror

2. Mark Zepezauer, The CIA’s Greatest Hits (Monroe, Maine: Common Courage Press, 1994), p. 87.

3. William Blum, Haiti 1986-1994: Who Will Rid Me of This Turbulent Priest, http://www.doublestandards.org/blum8.html

Honduras

1. William Blum, Rogue State (Monroe, Maine: Common Courage Press, 2000), p. 55.

2. Reports by Country: Honduras, Virtual Truth Commission, http://www.geocities.com/~virtualtruth/honduras.htm

3. James A. Lucas, Torture Gets The Silence Treatment, Countercurrents, July 26, 2004.

4. Gary Cohn and Ginger Thompson, Unearthed: Fatal Secrets, Baltimore Sun, reprint of a series that appeared June 11-18, 1995, in Jack Nelson-Pallmeyer, School of Assassins (Orbis Books, 2001), p. 46.

5. Michael Dobbs, Negroponte’s Time in Honduras at Issue, Washington Post, March 21, 2005.

Hungary

1. Malcolm Byrne, ed., The 1956 Hungarian Revolution: A History in Documents, November 4, 2002, http://www.gwu.edu/~nsarchiv/NSAEBB/NSAEBB76/index2.htm

2. Wikipedia, The Free Encyclopedia, http://www.answers.com/topic/hungarian-revolution-of-1956

Indonesia

1. Virtual Truth Commission, http://www.geocities.com/~virtualtruth/

2. Editorial, Indonesia’s Killers, The Nation, March 30, 1998.

3. Matthew Jardine, Indonesia Unraveling, Nonviolent Activist, Sept.-Oct. 1997 (Amnesty), 2/7/07.

4. Jose Maria Sison, Reflections on the 1965 Massacre in Indonesia, p. 5, http://qc.indymedia.org/mail.php?id=5602

5. Annie Pohlman, Women and the Indonesian Killings of 1965-1966: Gender Variables and Possible Direction for Research, p. 4, http://coombs.anu.edu.au/SpecialProj/ASAA/biennial-conference/2004/Pohlman-A-ASAA.pdf

6. Peter Dale Scott, The United States and the Overthrow of Sukarno, 1965-1967, Pacific Affairs 58, Summer 1985, pp. 239-264, http://www.namebase.org/scott

7. Mark Zepezauer, The CIA’s Greatest Hits (Monroe, Maine: Common Courage Press, 1994), p. 30.

Iran

1. Geoff Simons, Iraq: From Sumer to Saddam (New York: St. Martin’s Press, 1996), p. 317.

2. Chronology of American State Terrorism, http://www.intellnet.org/resources/american_terrorism/ChronologyofTerror.html

3. BBC, 1988: US Warship Shoots Down Iranian Airliner, http://news.bbc.co.uk/onthisday/default.stm

Iraq

Iran-Iraq War

1. Michael Dobbs, U.S. Had Key Role in Iraq Buildup, Washington Post, December 30, 2002, p. A01, http://www.washingtonpost.com/ac2/wp-dyn/A52241-2002Dec29?language=printer

2. GlobalSecurity.org, Iran-Iraq War (1980-1988), globalsecurity.org/military/world/war/iran-iraq.htm

U.S.-Iraq War and Sanctions

1. Ramsey Clark, The Fire This Time (New York: Thunder’s Mouth, 1994), pp. 31-32.

2. Ibid., pp. 52-54.

3. Ibid., p. 43.

4. Anthony Arnove, Iraq Under Siege (Cambridge, MA: South End Press, 2000), p. 175.

5. Food and Agriculture Organization, The Children are Dying, 1995, World View Forum, International Action Center, International Relief Association, p. 78.

6. Anthony Arnove, Iraq Under Siege (Cambridge, MA: South End Press, 2000), p. 61.

7. David Cortright, A Hard Look at Iraq Sanctions, The Nation, December 3, 2001.

U.S.-Iraq War 2003-?

1. Jonathan Bor, 654,000 Deaths Tied to Iraq War, Baltimore Sun, October 11, 2006.

2. Unknown News, http://www.unknownnews.net/casualties.html

Israeli-Palestinian War

1. Post-1967 Palestinian & Israeli Deaths from Occupation & Violence, May 16, 2006, http://globalavoidablemortality.blogspot.com/2006/05/post-1967-palestinian-israeli-deaths.html

2. Chronology of American State Terrorism, http://www.intellnet.org/resources/american_terrorism/ChronologyofTerror.html

Korea

1. James I. Matray, Revisiting Korea: Exposing Myths of the Forgotten War, Korean War Teachers Conference: The Korean War, February 9, 2001, http://www.trumanlibrary.org/Korea/matray1.htm

2. William Blum, Killing Hope (Monroe, Maine: Common Courage Press, 1995), p. 46.

3. Kanako Tokuno, Chinese Winter Offensive in Korean War: The Debacle of American Strategy, ICE Case Studies Number 186, May 2006, http://www.american.edu/ted/ice/chosin.htm

4. John G. Stoessinger, Why Nations Go to War (New York: St. Martin’s Press), p. 99.

5. Britannica Concise Encyclopedia, as reported in Answers.com, http://www.answers.com/topic/Korean-war

6. Exploring the Environment: Korean Enigma, www.cet.edu/ete/modules/korea/kwar.html

7. S. Brian Willson, Who are the Real Terrorists? Virtual Truth Commission, http://www.geocities.com/~virtualtruth/

8. Korean War Casualty Statistics, www.centurychina.com/history/krwarcost.html

9. S. Brian Willson, Documenting U.S. War Crimes in North Korea, Veterans for Peace Newsletter, Spring 2002, http://www.veteransforpeace.org/

Laos

1. William Blum, Rogue State (Monroe, Maine: Common Courage Press, 2000), p. 136.

2. Chronology of American State Terrorism, http://www.intellnet.org/resources/american_terrorism/ChronologyofTerror.html

3. Fred Branfman, War Crimes in Indochina and our Troubled National Soul, www.wagingpeace.org/articles/2004/08/00_branfman_us-warcrimes-indochina.htm

Nepal

1. Conn Hallinan, Nepal & the Bush Administration: Into Thin Air, February 3, 2004, fpif.org/commentary/2004/0402nepal.html

2. Human Rights Watch, Nepal’s Civil War: The Conflict Resumes, March 2006, http://hrw.org/english/docs/2006/03/28/nepal13078.htm

3. Wayne Madsen, Possible CIA Hand in the Murder of the Nepal Royal Family, India Independent Media Center, September 25, 2001, http://india.indymedia.org/en/2002/09/2190.shtml

Nicaragua

1. Virtual Truth Commission, http://www.geocities.com/~virtualtruth/

2. Timeline Nicaragua, www.stanford.edu/group/arts/nicaragua/discovery_eng/timeline/

3. Chronology of American State Terrorism, http://www.intellnet.org/resources/american_terrorism/ChronologyofTerror.html

4. William Blum, Nicaragua 1981-1990: Destabilization in Slow Motion, www.thirdworldtraveler.com/Blum/Nicaragua_KH.html

5. Wikipedia, the Free Encyclopedia, http://en.wikipedia.org/wiki/Iran-Contra_Affair

Pakistan

1. John G. Stoessinger, Why Nations Go to War (New York: St. Martin’s Press, 1974), pp. 157-172.

2. Asad Ismi, A U.S.-Financed Military Dictatorship, The CCPA Monitor, June 2002, Canadian Centre for Policy Alternatives, http://www.policyalternatives.ca, www.ckln.fm/~asadismi/pakistan.html

3. Mark Zepezauer, Boomerang (Monroe, Maine: Common Courage Press, 2003), pp. 123-124.

4. Arjum Niaz, When America Looked the Other Way, www.zmag.org/content/print_article.cfm?itemID=2821&sectionID=1

5. Leo Kuper, Genocide (Yale University Press, 1981), p. 79.

6. Bangladesh Liberation War, Wikipedia, the Free Encyclopedia, http://en.wikipedia.org/wiki/Bangladesh_Liberation_War#USA_and_USSR

Panama

1. Mark Zepezauer, The CIA’s Greatest Hits (Odonian Press, 1998), p. 83.

2. William Blum, Rogue State (Monroe, Maine: Common Courage Press, 2000), p. 154.

3. U.S. Military Charged with Mass Murder, The Winds, 9/96, www.apfn.org/thewinds/archive/war/a102896b.html

4. Mark Zepezauer, The CIA’s Greatest Hits (Monroe, Maine: Common Courage Press, 1994), p. 83.

Paraguay: See South America: Operation Condor

Philippines

1. Romeo T. Capulong, A Century of Crimes Against the Filipino People, presentation, Public Interest Law Center, World Tribunal for Iraq Trial in New York City, August 25, 2004.

http://www.peoplejudgebush.org/files/RomeoCapulong.pdf.

2. Roland B. Simbulan, The CIA in Manila: Covert Operations and the CIA’s Hidden History in the Philippines, Equipo Nizkor Information – Derechos, derechos.org/nizkor/filipinas/doc/cia.

South America: Operation Condor

1. John Dinges, Pulling Back the Veil on Condor, The Nation, July 24, 2000.

2. Virtual Truth Commission, Telling the Truth for a Better America, www.geocities.com/~virtualtruth/condor.htm

3. Operation Condor, http://en.wikipedia.org/wiki/Operation_Condor#US_involvement.

Sudan

1. Mark Zepezauer, Boomerang (Monroe, Maine: Common Courage Press, 2003), pp. 30, 32, 34, 36.

2. The Black Commentator, Africa Action, The Tale of Two Genocides: The Failed US Response to Rwanda and Darfur, 11 August 2006, http://www.truthout.org/docs_2006/091706X.shtml.

Uruguay (see South America: Operation Condor)

Vietnam

1. Mark Zepezauer, The CIA’s Greatest Hits (Monroe, Maine: Common Courage Press, 1994), p. 24.

2. Casualties – US vs NVA/VC,

http://www.rjsmith.com/kia_tbl.html.

3. Brian Wilson, Virtual Truth Commission

http://www.geocities.com/~virtualtruth/

4. Fred Branfman, U.S. War Crimes in Indochina and Our Duty to Truth, August 26, 2004

www.zmag.org/content/print_article.cfm?itemID=6105&sectionID=1

5. David K. Shipler, Robert McNamara and the Ghosts of Vietnam, nytimes.com/library/world/asia/081097vietnam-mcnamara.html

Yugoslavia

1. Sara Flounders, Bosnia Tragedy: The Unknown Role of the Pentagon, in NATO in the Balkans (New York: International Action Center), pp. 47-75.

2. James A. Lucas, Media Disinformation on the War in Yugoslavia: The Dayton Peace Accords Revisited, Global Research, September 7, 2005, http://www.globalresearch.ca/index.php?context=viewArticle&code=LUC20050907&articleId=899

3. Yugoslav Wars in the 1990s

http://en.wikipedia.org/wiki/Yugoslav_wars.

4. George Kenney, The Bosnia Calculation: How Many Have Died? Not Nearly as Many as Some Would Have You Think, NY Times Magazine, April 23, 1995

http://www.balkan-archive.org.yu/politics/war_crimes/srebrenica/bosnia_numbers.html

5. Chronology of American State Terrorism

http://www.intellnet.org/resources/american_terrorism/ChronologyofTerror.html.

6. Croatian War of Independence, Wikipedia

http://en.wikipedia.org/wiki/Croatian_War_of_Independence

7. Human Rights Watch, New Figures on Civilian Deaths in Kosovo War, February 7, 2000, http://www.hrw.org/press/2000/02/nato207.htm.

U.S. War Crimes and The Need To Recognize The Psychology Of Evil

Also Carl Herman:

“Recognized facts of US wars:

No nation’s government attacked the US on 9/11. The US acknowledges the Afghanistan government had nothing to do with 9/11. The UN Security Council issued two Resolutions after 9/11 (1368 and 1373) for international cooperation for factual discovery, arrests, and prosecutions of the 9/11 criminals.

The Afghan government said they would arrest any suspect upon presentation of evidence of criminal involvement. The US rejected these Resolutions, and violated the letter and intent of the UN Charter by armed attack and invasion of Afghanistan.

For more detail, I recommend International Law Professor Dr. Francis Boyle’s “End the crime that is the war on Afghanistan.”

The US government acknowledges Iraq had nothing to do with 9/11. The UN Security Council issued a standing cease fire that no single nation could violate by resuming armed attacks. The UN Security Council also resolved for weapons inspections that were nearly complete when the US violated the cease fire, weapons inspections, and letter and intent of the UN Charter with armed attack.”

The Issue is Not Trump, It is Us – John Pilger

Join us on social media

Veterans for Peace Madison

Clarence Kailin, Chapter 25

Facebook: https://www.facebook.com/groups/madisonvfp

Twitter: https://twitter.com/MadisonVfp

@MadisonVfp